How NOT to apply Artificial Intelligence in your business

Don't fall for the sirens' song and start replacing humans with AI without first making sure you are pursuing the proper objectives and metrics

Cost reductions. The Holy Grail for many managers and businesspeople. So many grave mistakes have been made in their name. Every new technology and business trend gets its share of people jumping into it in the wrong way, without first considering what they are really trying to achieve and whether they are prepared as an organization to achieve it. Narrow Artificial Intelligence, the current trend, is no exception.

A couple of weeks ago, a USD$100m entrepreneur became infamous for celebrating on Twitter that he had replaced 90% of his Customer Support Representatives with an AI chatbot [1]. He did it as a marketing stunt, to promote the AI chatbot his team had built to handle his business’ Customer Support tasks, which he was trying to launch as a new product. Instead, he was met with a strong backlash (it was even analyzed in media like Business Insider [2]) from a large number of people questioning many things: from the wisdom of his decision to the way he executed it and its social impact on his former workers.

In a previous publication I discussed Artificial Intelligence as a tool for Customer Experience Management [3], not only for its Customer Support automation capabilities, but as a whole. Narrow Artificial Intelligence (the name researchers give to the current state of Artificial Intelligence, which excels at specific tasks but is not so good at general ones, as a Human Intelligence or a future Artificial General Intelligence might be) can be used for many things. Building a Customer Experience strategy requires lots of information gathering, analysis, synthesis and visualization. AI tools can save lots of time and effort on these tasks. The Customer Experience operation itself can benefit from AI tools too: live data collection and analysis, task automation, general classification and, of course, automating interaction with customers to some degree (“chatbots”).

But I also discuss in my publication that we must be careful about how we apply technology that puts distance between a human representative and a customer who might be angry, confused and in pain. It is not the first time the Customer Support industry has made this mistake, and I doubt it will be the last. With the advent of Interactive Voice Response, or IVR (“Press 1 to know the state of your order, Press 2 to make a modification, Press 9 to speak with a representative…”), business leaders were sold on the idea of replacing the “expensive” humans with automated telephone menus capable of solving customers’ issues 24/7 at a fraction of the cost. Eliminating most of the support workforce became an objective, and it produced intricate multilevel menus that tried to force customers to find their way to a solution without ever contacting a human representative. If you are over 20 years old, you have probably had to deal with the frustration this kind of menu can produce.

Fast forward a few years, and we had the same situation with the WhatsApp, Facebook, etc. chatbots. Those were basically IVR menu trees translated into a messaging platform, with some intelligence added. But the results were mostly the same: frustration and anger for the customer. The questions we should be asking ourselves, as the ones in charge of Customer Experience Management in our organizations, are: Why does it fail time and again? And why do we keep trying?

To answer these questions, let’s use the recent case of the entrepreneur mentioned as an example for our analysis.

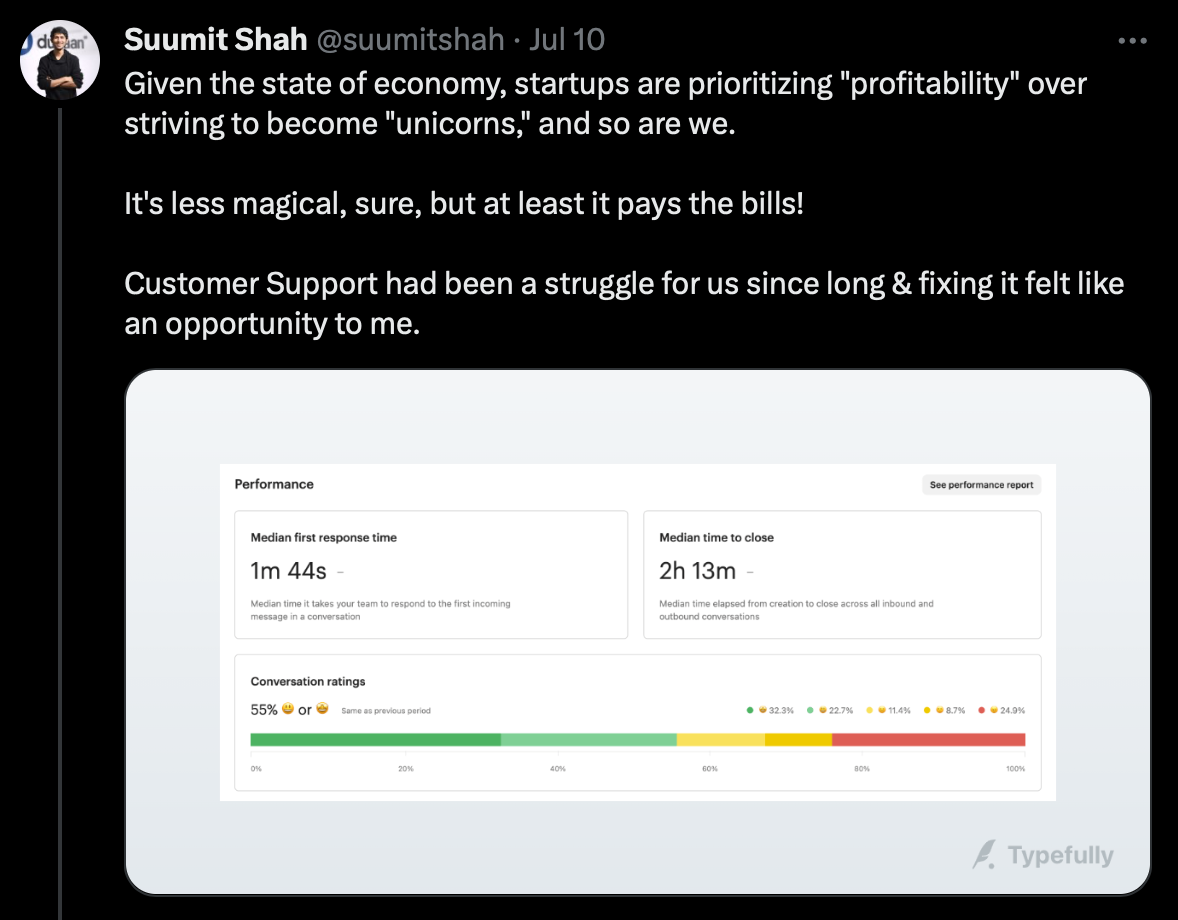

First of all, he clearly states what his drivers were: the metrics he measures objectively and that, in his view, show that the decision brought progress.

Time to first response

Resolution time

Customer support costs

All of those metrics are important. But do you see the pattern there? Those are Customer Support metrics, not Customer Experience ones! All of them are inward facing (looking at the organization), instead of outward facing (looking at the customer). Yes, collecting Customer Experience data is harder than collecting Customer Support data. But it is indispensable, or you might end up shooting yourself in the foot. To do a proper analysis, we must follow the entrepreneur’s reasoning, so let’s keep going.

OK, so he is clearly trying to optimize costs because of the “state of economy”. And he is making a comparison between being “profitable” and “trying to become a unicorn”. Let’s stop here for a moment. His assumption is that “becoming a unicorn” means spending money on properly solving your customers’ problems, because “unicorns” strive to delight their customers. So, going into “profitable mode” means cutting Customer Support costs, because you are not trying to be a unicorn. Customer Happiness is no longer relevant if you are going for survival in a harsh economy. So it becomes highly important to you, as a business, to close tickets fast, without even checking what the impact of that newfound speed is: was the customer satisfied? Was he just sent away with an automated, half-baked answer to try by himself, only to come back angrier a day later, or not to come back at all? That’s why you cannot judge the success of these strategies solely by Customer Support metrics. But let’s continue, as there is more.

He asks “Why would someone with tech/product expertise work as a support agent?”. This is a complex question. We are entering the terrain of personal preferences and psychology here, and the answers can be as diverse as people are. This is like asking: “Why would someone enter a building in flames to rescue people?”. Having led many people in Customer Support functions, I can say that some of them just liked solving other people’s problems with their knowledge. And anyone who has ever taught anything knows that you learn more by teaching than by learning in the first place. Others do it because they prefer solving issues to using the product for its intended purpose. “Why does a racing car mechanic tune racing cars instead of driving them?”. And we could continue listing personal motivations. Try asking your Customer Support team this question, and be surprised.

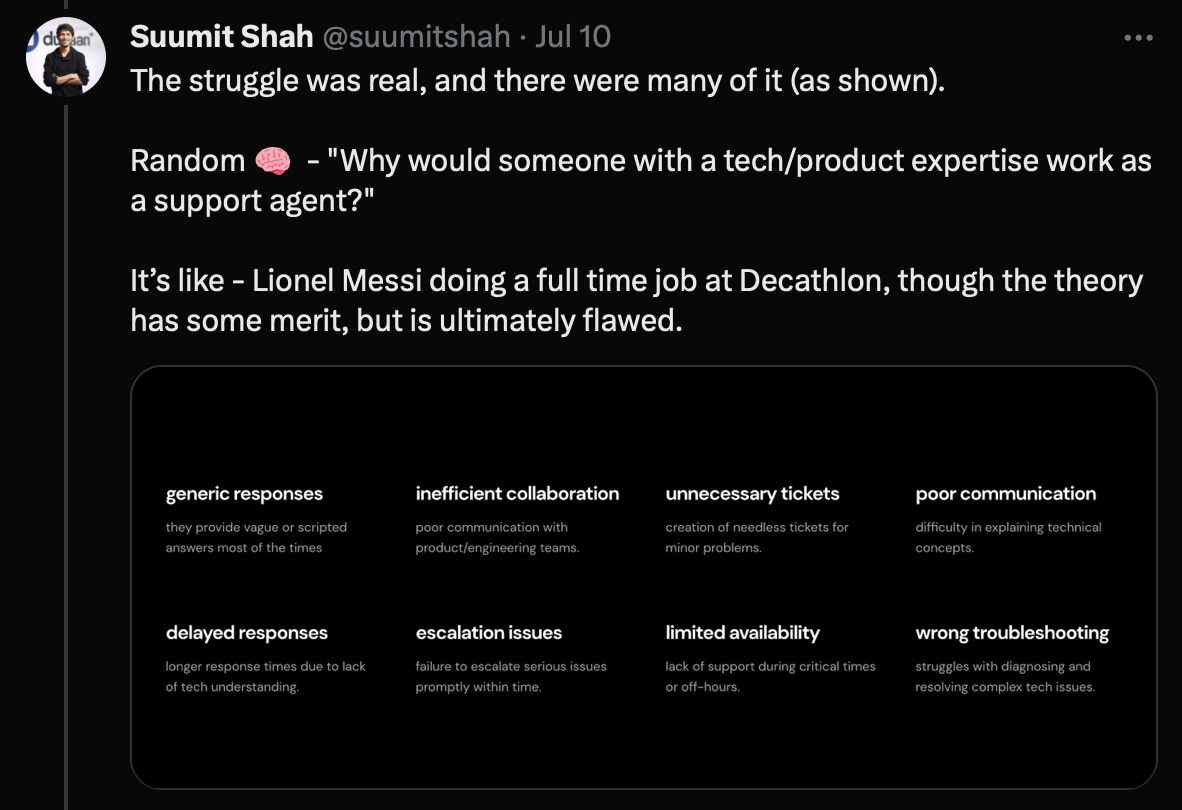

But he also lists some “reasons” to consider human Customer Support representatives a liability when compared to AI ones. Let’s go through those one by one.

Generic responses. “They provide vague or scripted responses most of the time”. Yeah, but that’s why Customer Support tiers and escalation exist. Imagine that you have a large user base (like a telecom or a university). You have TONS of people making the same small mistakes day after day. So you can expect to give the same answers constantly. That’s why you have a Tier 1 Customer Support, which handles those massive amounts of requests. Can you apply Artificial Intelligence here to automate? Sure. But you must have the proper metrics in place to know that you are not breaking things more than they currently are.

Inefficient collaboration. “Poor communication with product/engineering teams”. And the AI chatbot is supposed to communicate better? He is stating a problem with his Customer Support escalation process. That is not going to be solved with a bot, but by fixing the process.

Unnecessary tickets. “Creation of needless tickets for minor problems”. Minor problem according to whom? The business? A worldwide statistic found that, on average, only 5% of customers having issues report them. The other 95% just live with the issue and complain to others about it (bad Word of Mouth). A “minor problem” as seen from the business can be a dealbreaker for the customer. No ticket is unnecessary. You should, instead, be encouraging your customers to let you know what is wrong with your product or service, so you can fix it.

Poor communication. “Difficulty in explaining technical concepts”. Again, that’s why Customer Support tiers exist. If you have a low-cost, low-paid Tier 1 Customer Support representative, trained to answer only from the knowledge base, you cannot expect him to give a lecture on software engineering and the inner workings of your product. And the customer probably doesn’t want to hear that either. They just wanted to use your product for the purpose they purchased it for and, since it failed to deliver that, they want at least to have the issue fixed quickly so they can get on with their lives. Do you want an AI chatbot to spew paragraphs of elaborate technical explanations at a customer who is probably time-pressed and certainly didn’t expect your product to fail and require support?

Delayed responses. “Longer response times due to the lack of tech understanding”. Again, this is why Tier 2 and Tier 3 Customer Support Representatives exist. Are you asking your Tier 1 representatives to have deep knowledge of the product? Or perhaps those tickets should just be escalated? How do you decide whether something escalates? Can your AI chatbot do it without first angering the customer by making him waste time?

Escalation issues. “Failure to escalate serious issues promptly within time”. Same as before. Can an AI chatbot promptly determine that it will be unable to service the customer, so it can escalate the issue? Would you place a complicated voice menu in front of a medical emergency telephone number? Surprisingly, I know several that do that. Currently AI is capable of determining the “sentiment” of a user, either from his voice or from the words he is using. But would you risk it? For non-life-threatening services it could be attempted but, again, you must measure the results from the customers’ side.

Limited availability. “Lack of support during critical times or off-hours”. Most Customer Support services provided by businesses also have SLA tiers. If someone requires 24/7 support, he needs to pay for that SLA tier. If you are trying to give everyone 24/7 support, then it is a business decision and it comes with a cost. Some issues will be fixable by Tier 1 representatives (who could potentially be replaced or supported by AI chatbots, online FAQs, knowledge bases, etc.) but others will still require Tier 2 and 3 experts. So the human availability problem won’t go away unless you plan for it. And for its costs.

Wrong troubleshooting. “Struggles with diagnosing and resolving complex tech issues”. Again, are you asking the proper Tier of support? Is an AI chatbot going to be able to troubleshoot better than a human? Do you even understand how GPT-style LLMs work and how they produce their answers? You are placing too much faith in them, my friend.
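To make the escalation question concrete, here is a minimal sketch of the kind of hand-off rule a chatbot deployment would need. It is only an illustration: the `Conversation` fields, the thresholds and the `should_escalate` function are all hypothetical, and the sentiment and confidence scores are assumed to come from whatever tooling you already use.

```python
from dataclasses import dataclass

# Hypothetical thresholds: tune them against your own customer-facing data.
MAX_BOT_TURNS = 3       # bot answers allowed before a human takes over
MIN_SENTIMENT = -0.3    # assumed scale: -1.0 (angry) to 1.0 (happy)
MIN_CONFIDENCE = 0.7    # assumed scale: 0.0 to 1.0


@dataclass
class Conversation:
    bot_turns: int            # answers the bot has already given
    sentiment: float          # supplied by your sentiment tool (assumption)
    answer_confidence: float  # bot's confidence in its next answer (assumption)
    asked_for_human: bool     # customer explicitly requested a person


def should_escalate(conv: Conversation) -> bool:
    """Return True when the conversation should be handed to a human."""
    if conv.asked_for_human:
        return True  # never argue with an explicit request
    if conv.sentiment < MIN_SENTIMENT:
        return True  # customer is already upset
    if conv.answer_confidence < MIN_CONFIDENCE:
        return True  # bot is likely guessing
    return conv.bot_turns >= MAX_BOT_TURNS  # stop wasting the customer's time
```

Even a rule this simple forces the questions raised above: who sets the thresholds, and which customer-side measurement tells you they are set correctly?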

From what the entrepreneur publishes I can perceive an interesting struggle in his reasoning:

Why have experts (Lionel Messi) as troubleshooters in front of my customers? Let’s get rid of them! They are costly!

but then:

Why aren’t the cheap guys I’m trying to place in front of my customers able to: give less generic responses, collaborate like project managers, communicate like CEOs, respond quickly by solving complex technical issues from memory, be available 24/7, etc.?

His business has probably accumulated tickets over time because of bureaucracy, bad escalation policies, poorly trained Tier 1 Customer Service Representatives and insufficient Tier 2 and 3 product experts. He is also monitoring only inward facing metrics, so he really doesn’t know what his customers’ sentiment is about his Customer Support or about the whole Customer Experience around his business. And without a baseline on this, he just replaced 90% of his Customer Support staff (probably all of the Tier 1s, and some of the Tier 2 and 3). The result?

Yeah, the business experienced an abrupt consumption of all the tickets that had probably been stuck over time because of bad processes, but we know nothing about the resolution level of the issues, the customers’ sentiment after being serviced, whether the solution provided was the right one, whether there are other issues left unexpressed, etc. For all we know, the chatbot might be giving hallucinated answers to the customers, who, angry after wasting their time, don’t even come back to try again, adding themselves to the 95% of people who never complain (and the 96% of people who will leave you as soon as they can because of bad Customer Experience [4], according to Forbes).
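The 5% reporting figure mentioned earlier gives a rough way to see why closed tickets say so little. The sketch below is back-of-the-envelope arithmetic only: the function names are hypothetical, and it assumes each ticket comes from one distinct customer and that the 5% rate applies to your business, which you would need to verify.

```python
def estimate_affected_customers(tickets: int, report_rate: float = 0.05) -> int:
    """Estimate how many customers had an issue, given how many reported it.

    Assumes one ticket per affected customer and a known reporting rate.
    """
    if not 0 < report_rate <= 1:
        raise ValueError("report_rate must be in the interval (0, 1]")
    return round(tickets / report_rate)


def estimate_silent_customers(tickets: int, report_rate: float = 0.05) -> int:
    """Estimate how many affected customers never opened a ticket."""
    return estimate_affected_customers(tickets, report_rate) - tickets


# 200 tickets at a 5% reporting rate suggest about 4,000 affected customers,
# of whom about 3,800 never said a word.
print(estimate_affected_customers(200))
print(estimate_silent_customers(200))
```

Under these assumptions, every customer who stops reporting because the chatbot wasted their time makes the ticket queue look healthier while the real problem grows.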

Don’t get me wrong. I am an Artificial Intelligence enthusiast, and have dabbled with it for many years now. And that’s exactly the reason why I know how it has been evolving over time, and its current limitations. It is an excellent and powerful tool when used under human supervision. It can help to automate many processes, almost eliminate effort for certain types of tasks, and provide timely information that previously would have taken hours or days of human work. That’s the reason it is tempting to place it in front of information-hungry customers who are trying to solve an issue with a product. But every customer is different, and every customer is also in a different situation at the moment of requesting support. The absolute lack of empathy of an AI chatbot can be, in itself, a cause of anger for a customer who is in dire circumstances because of our product. AI cannot do empathy (yet). Also, the personal experience that a human representative brings to the table in each interaction can provide the magic required for a quick and satisfactory resolution. So, by replacing humans with AI in human interaction roles we are leaving behind many human frailties (like the need to sleep), but also many human strengths (like empathy, personal experience and even instincts).

Faster and wrong will always be worse than slower and right

If we are going to introduce Narrow Artificial Intelligence into our Customer Experience workflows, we first need to be sure we are measuring the right things. AI can make things happen faster. But faster and wrong will always be worse than slower and right. So, as with everything related to Customer Experience, we measure, then we try, then we measure again, then we adjust for better results. Embrace an Agile approach for Customer Experience Strategy implementations. Embrace Agile Objectives and Key Results definition and measurement. First apply AI in the areas that are completely under your control (like internal processes, or perhaps internal customer support) so you learn its benefits and deficiencies. Then try it outside, and always actively measure the impact (the final customer impact, not just some ticket closure metrics).
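The measure, try, measure again loop can be sketched as a simple comparison between a baseline period and a pilot period. This is an illustration under assumptions, not a prescribed method: the metric names (`csat`, `reopen_rate`), the tolerance and the verdict labels are all hypothetical stand-ins for whatever outward facing measures you actually collect.

```python
from dataclasses import dataclass


@dataclass
class PeriodMetrics:
    avg_resolution_minutes: float  # inward facing (Customer Support)
    csat: float         # outward facing: share of satisfied customers, 0.0 to 1.0
    reopen_rate: float  # customer-side proxy: share of tickets reopened, 0.0 to 1.0


def pilot_verdict(baseline: PeriodMetrics, pilot: PeriodMetrics,
                  tolerance: float = 0.02) -> str:
    """Judge an AI pilot by customer impact first and speed second."""
    faster = pilot.avg_resolution_minutes < baseline.avg_resolution_minutes
    customers_ok = (
        pilot.csat >= baseline.csat - tolerance
        and pilot.reopen_rate <= baseline.reopen_rate + tolerance
    )
    if customers_ok and faster:
        return "expand"
    if customers_ok:
        return "keep measuring"
    return "roll back and adjust"  # faster and wrong is still wrong


baseline = PeriodMetrics(avg_resolution_minutes=120, csat=0.82, reopen_rate=0.08)
pilot = PeriodMetrics(avg_resolution_minutes=15, csat=0.61, reopen_rate=0.25)
print(pilot_verdict(baseline, pilot))  # roll back and adjust
```

The design choice worth noting is the order of the checks: speed only counts once the customer-side numbers have held up, which is impossible to verify without a baseline taken before the change.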

Thank you for reading this article. If you found it helpful, please share it with your friends and coworkers. I write weekly about Technology, Business and Customer Experience, which lately leads me to write a lot about Artificial Intelligence too, because it is permeating everything. Don’t hesitate to subscribe for free to this publication, so you can stay informed on this topic and all the related things I publish here.

As usual, please leave any comments and suggestions you may have in the comments area. Let’s start a discussion on this topic.

Cheers.

When you are ready, here is how I can help:

Check my FREE webinar (if you haven’t already): “Three Little Secrets that will Improve your Bottom Line”

After that one, check the follow up webinar: “Your Market Damage Model will Save you Money”

If you like them, you can get my introductory course “Customer Experience 101” (don’t forget to use your 50% discount from being a newsletter subscriber)

References

[1] Dukaan CEO lays off 90% of its Customer Support Representatives to replace them with AI

[2] An e-commerce CEO is getting absolutely roasted online for laying off 90% of his support staff after an AI chatbot outperformed them. https://www.businessinsider.com/ai-chatbot-ceo-laid-off-staff-human-support-2023-7

[3] Artificial Intelligence for Customer Experience. https://alfredozorrilla.substack.com/p/artificial-intelligence-for-customer-experience

[4] Ninety-Six Percent Of Customers Will Leave You For Bad Customer Service